To run an LED from a 3.7V nominal Li-ion rechargeable battery (4.2V to 2.7V), I have been using 350mA linear regulators with a 0.12V dropout, combined in parallel to get different drive currents.

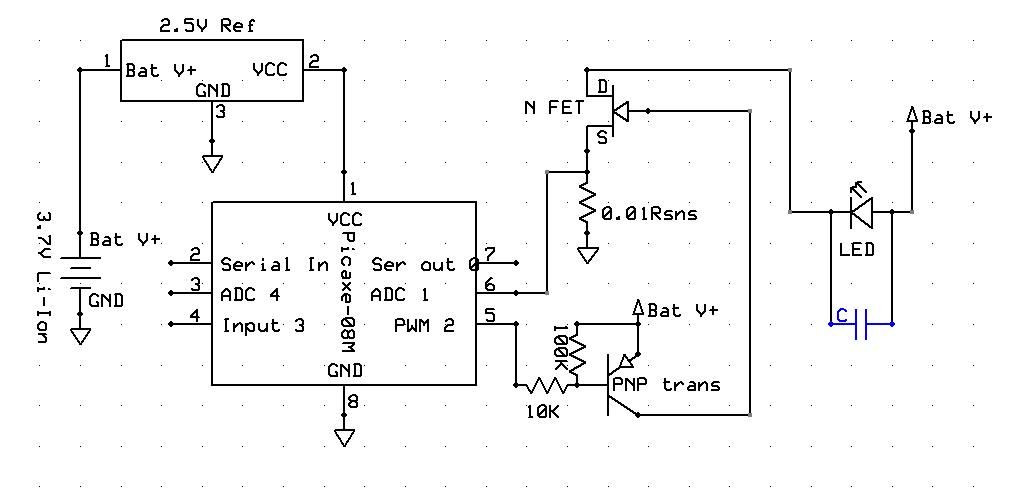

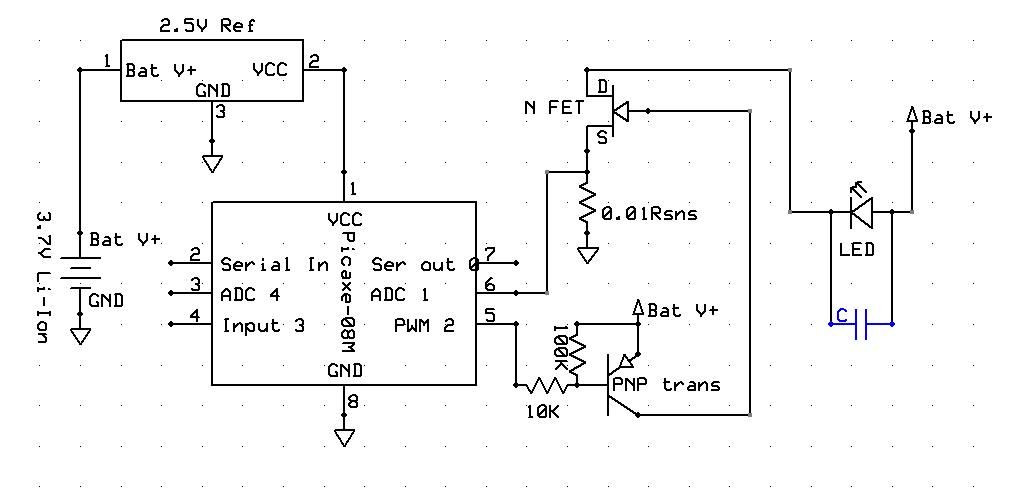

I had an idea, though, now that I've learned how FETs actually work from the switch I made: build a PWM/direct-drive LED driver. The plan is to supply the PICAXE with a constant 2.5V from the reference so it can accurately measure the voltage drop across the current sense resistor.
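To make the measurement step concrete, here is a rough sketch of turning an ADC reading into a current. I'm assuming a 10-bit ADC referenced to the 2.5V supply, and the sense resistor value `R_SENSE` is a made-up placeholder, not something from the schematic.

```python
ADC_MAX = 1023   # full-scale count, assuming a 10-bit ADC reading
V_REF = 2.5      # volts; the constant supply/reference from the post
R_SENSE = 0.01   # ohms; hypothetical sense resistor value


def adc_to_current(counts: int) -> float:
    """Convert a raw ADC count across the sense resistor to amps."""
    v_sense = counts / ADC_MAX * V_REF   # voltage across the sense resistor
    return v_sense / R_SENSE             # Ohm's law: I = V / R


if __name__ == "__main__":
    # One count is about 2.4mV, or about 0.24A with this resistor value.
    print(f"{adc_to_current(41):.2f} A")
```

With these assumed values, a 10A on-state current only produces about 41 counts, so the resistor value trades measurement resolution against the voltage it steals from the LED.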

By measuring that voltage and working out the instantaneous current, I want to use PWM to bring the average current down to the LED's spec. As battery voltage and current drop, the duty cycle would increase to hold the average current constant. Once the battery voltage falls to the LED voltage, the duty cycle reaches 100% and the LED is driven directly from the battery through the FET.

So, for instance, if I'm using an LED with a voltage drop of ~3.6V at 5A and the fresh battery voltage sags to 3.7V under a 5A load (typical), the LED would see about 10A while the FET is on, so the PICAXE would run the FET at 50% duty cycle to start.
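The duty cycle logic described above could be sketched roughly like this. It assumes the average LED current is simply duty cycle times the on-state current, which ignores any output capacitor and switching edges. The function name `duty_for_target` and the list of sagging currents are made up for illustration.

```python
def duty_for_target(i_on_amps: float, i_target_amps: float) -> float:
    """Duty cycle (0.0 to 1.0) that averages i_on_amps down to i_target_amps.

    Assumes average current = duty * on-state current.
    """
    if i_on_amps <= i_target_amps:
        # Battery can no longer push more than the target: direct drive.
        return 1.0
    return i_target_amps / i_on_amps


if __name__ == "__main__":
    target = 5.0  # amps; LED spec from the example
    # Hypothetical on-state currents as the battery drains.
    for i_on in (10.0, 8.0, 6.0, 5.0, 4.0):
        print(f"on-state {i_on:4.1f} A -> duty {duty_for_target(i_on, target):.3f}")
```

With the numbers from the example, 10A measured against a 5A target gives 50% duty, and the duty climbs to 100% once the measured current falls to the target.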

A capacitor on the output would lower the ripple current in the LED during the PWM phase, increasing its efficiency. I'd have to work out what PWM frequency I want before I can size the capacitor; I didn't include it in the schematic.
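For a first guess at how frequency and capacitor size relate, here is a very rough sketch. It assumes the capacitor alone supplies the full LED current during the FET's off time and uses the simple droop relation C = I × t_off / ΔV. It ignores capacitor ESR and the LED's steep voltage/current curve, and the frequency and allowed ripple below are hypothetical numbers, not values from my design.

```python
def cap_for_ripple(i_led_amps: float, duty: float,
                   freq_hz: float, dv_volts: float) -> float:
    """First-order output capacitance (farads) for a given voltage droop.

    Assumes the capacitor carries the whole LED current while the FET is off.
    """
    t_off = (1.0 - duty) / freq_hz          # seconds the FET is off per cycle
    return i_led_amps * t_off / dv_volts    # C = I * dt / dV


if __name__ == "__main__":
    # Hypothetical operating point: 5 A LED, 50% duty, 10 kHz PWM, 0.1 V droop.
    c = cap_for_ripple(5.0, 0.5, 10_000.0, 0.1)
    print(f"{c * 1e6:.0f} uF")
```

Under these assumptions the required capacitance scales inversely with PWM frequency, so a higher frequency lets the capacitor be smaller.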

So, does this sound feasible? I'm sure there would be trial-and-error programming and quite a few questions from me along the way. But if I'm on to something that will actually work, and not just some useless harebrained scheme, I'd like to give it a try. I think I'd learn quite a bit.
